About me

I am a research faculty member in the Department of Civil and Environmental Engineering (CEE) and the Maryland Transportation Institute (MTI) at the University of Maryland, College Park (UMD). I am honored to work under the supervision of Dr. Xianfeng (Terry) Yang and to be part of the Maryland Transportation & Artificial Intelligence Lab (M-TRAIL).

Previously, I received my Ph.D. in Electrical and Computer Engineering from the University of Arizona in 2025, where I was fortunate to be advised by Dr. Siyang Cao in the UA Radar Group. From 2019 to 2021, I worked at the Artificial Intelligence Research Center, Peng Cheng Laboratory (PCL). I earned my M.E. in Integrated Circuit Engineering from Peking University in 2019 and my B.S. in Applied Physics from Northeast Petroleum University in 2016.

My research aims to turn raw sensor data into dependable intelligence for the physical world, with applications in autonomous driving, intelligent transportation systems, and smart robotics. Specific interests include:

📸 Sensor Data Processing

Multi-sensor fusion and calibration across Camera · Radar · LiDAR · GNSS, with attention to spatial alignment, time synchronization, uncertainty modeling, and long-term stability.

🎯 Deep Learning-based Perception

Deep models for object classification, detection, and multi-object tracking (MOT), with the goal of achieving real-time, reliability-aware perception in uncertain and dynamic environments. I also seek to develop perception-driven prediction, control, and planning strategies that seamlessly translate perception into action, ultimately supporting proactive, safety-critical decisions at system scale.

🔀 Multimodal Deep Learning

Vision-Language Models (VLMs) and Representation Learning that integrate information from multiple sensors and modalities (e.g., vision, language, and spatial signals) to couple low-level perception with high-level semantics and reasoning for comprehensive environmental understanding.

Research theme. From raw, heterogeneous sensors to reliable, quantitatively validated situational awareness for the real world.

📢 News

- [11/2025] — 🎉 Paper accepted to IEEE Transactions on Instrumentation and Measurement (T-IM).

- [10/2025] — 🎉 Paper accepted to IEEE Transactions on Intelligent Transportation Systems (T-ITS).

- [10/2025] — 🏆 Unlocked first GitHub Achievement Starstruck: Created a highly-starred repository.

- [08/2025] — 🏆 Joined the University of Maryland, College Park (UMD) as a Faculty Assistant.

- [06/2025] — 🌟 Featured in the ECE Class of 2025 Spotlight at the University of Arizona.

- [05/2025] — 🎓 I defended my dissertation and graduated from the University of Arizona!

- [01/2025] — 🎉 Paper accepted to IEEE Transactions on Radar Systems (TRS).

- [07/2024] — 🎉 Paper accepted to IEEE Transactions on Intelligent Transportation Systems (T-ITS).

- [05/2023] — 🎤 Presented a paper at the 2023 IEEE Radar Conference (RadarConf23).

Looking for collaborators and open-source contributors? Ping me via email or GitHub!

🔬 Selected Projects

Drone-Based Work-Zone Safety Alert System

Abstract

A drone-based work-zone safety alert system was developed, integrating state-of-the-art deep learning and computer vision to enable real-time traffic monitoring and proactive warnings.

Deep Learning-Based Online Automatic Targetless LiDAR–Camera Calibration

Abstract

Accurate multi-sensor calibration is essential for deploying robust perception systems in applications such as autonomous driving and intelligent transportation. Existing LiDAR-camera calibration methods often rely on manually placed targets, preliminary parameter estimates, or intensive data preprocessing, limiting their scalability and adaptability in real-world settings. In this work, we propose a fully automatic, targetless, and online calibration framework, CalibRefine, which directly processes raw LiDAR point clouds and camera images. Our approach is divided into four stages: (1) a Common Feature Discriminator that leverages relative spatial positions, visual appearance embeddings, and semantic class cues to identify and generate reliable LiDAR-camera correspondences, (2) a coarse homography-based calibration that uses the matched feature correspondences to estimate an initial transformation between the LiDAR and camera frames, serving as the foundation for further refinement, (3) an iterative refinement to incrementally improve alignment as additional data frames become available, and (4) an attention-based refinement that addresses non-planar distortions by leveraging a Vision Transformer and cross-attention mechanisms.

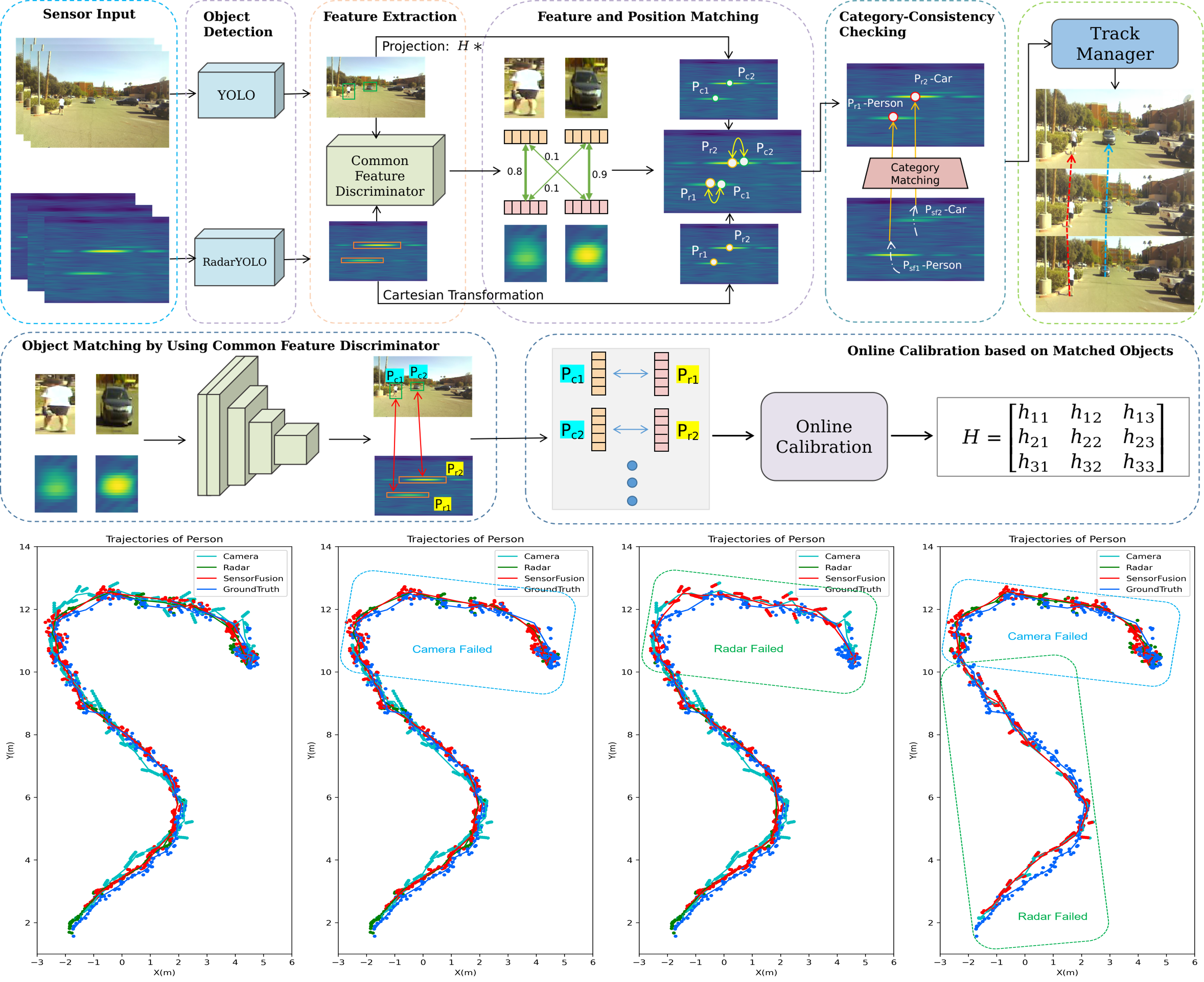

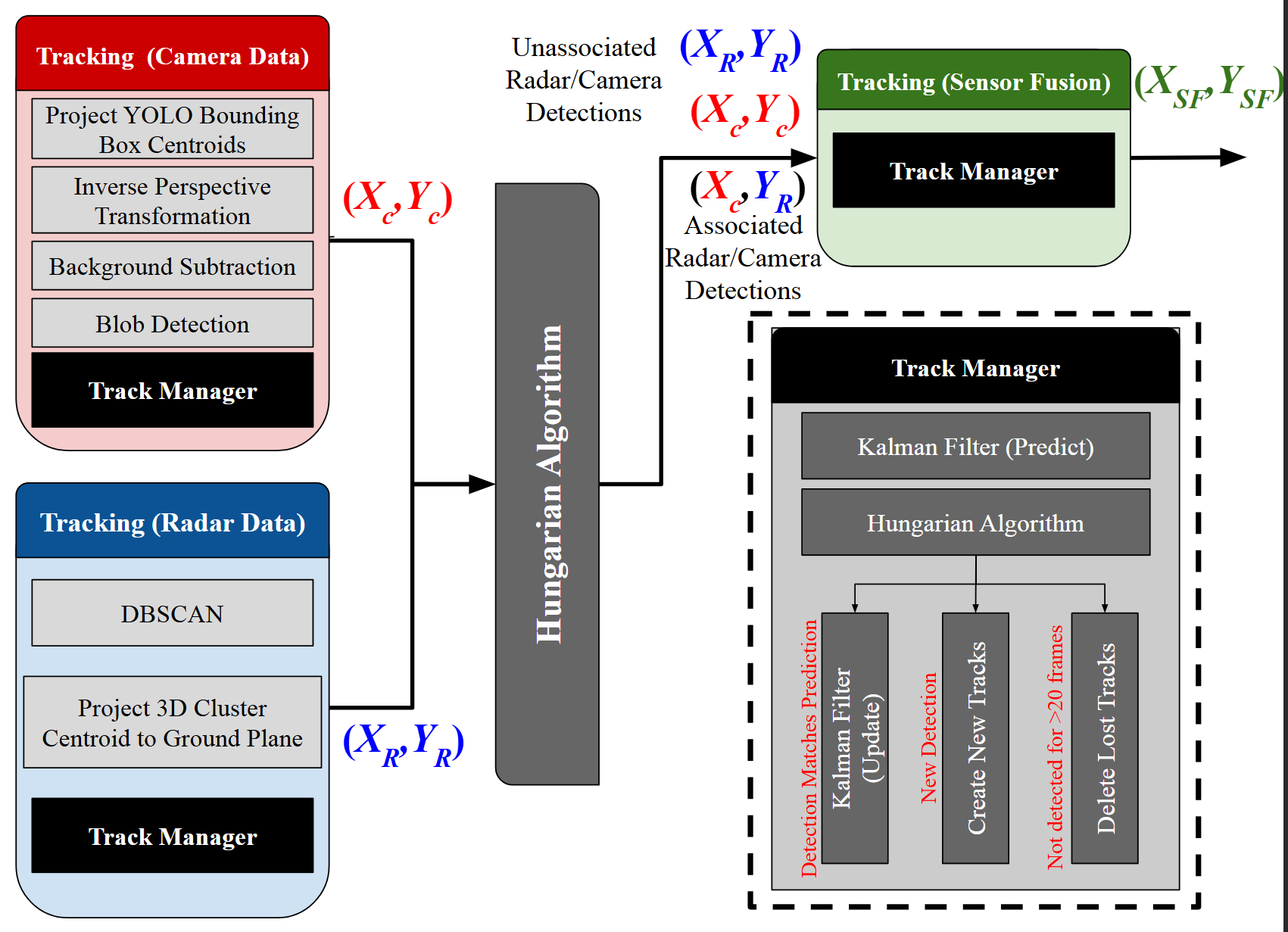

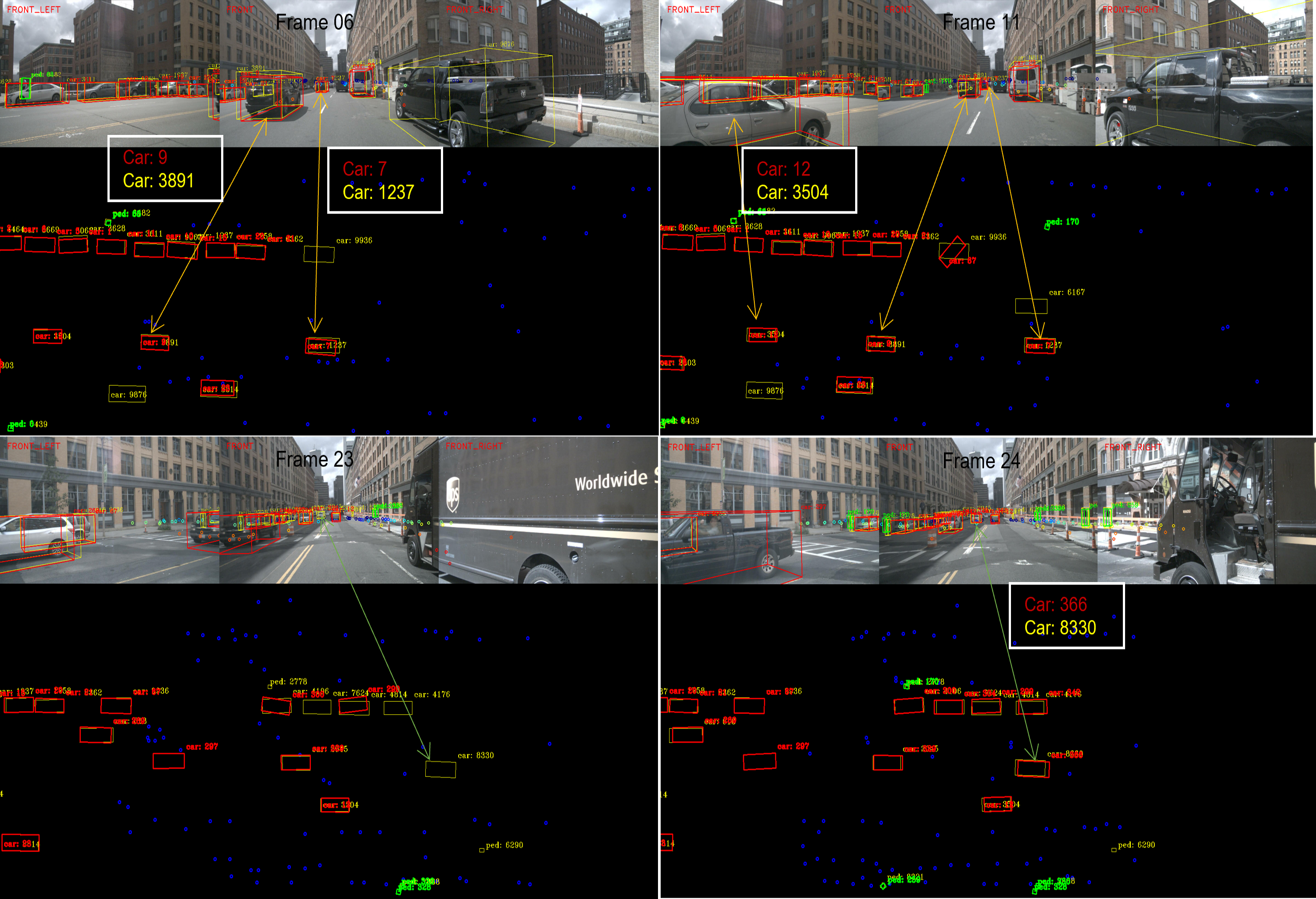
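The coarse homography stage (2) can be illustrated with a minimal sketch. It assumes that the matched LiDAR features have already been expressed as 2-D coordinates on a common plane (for example, a ground-plane projection) and that exactly four correspondences are available. The helper names (`solve_linear`, `homography_from_4_points`, `apply_homography`) are hypothetical and do not come from the CalibRefine code. The direct linear transform with h33 fixed to 1 is a standard textbook formulation, not necessarily the one used in the paper.

```python
def solve_linear(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate correspondences (e.g. three collinear points)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def homography_from_4_points(src, dst):
    """Estimate H (with H[2][2] = 1) mapping 4 plane points (x, y) to pixels (u, v)."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h31 x + h32 y + 1) = h11 x + h12 y + h13, and likewise for v
        rows.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        rhs.append(u)
        rows.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        rhs.append(v)
    h = solve_linear(rows, rhs)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def apply_homography(H, point):
    """Map a plane point through H, including the projective division."""
    x, y = point
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return ((H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w)
```

Given four LiDAR-to-pixel matches, `homography_from_4_points(src, dst)` returns a 3×3 list that `apply_homography` can use to project further LiDAR points into the image. With more than four matches, the same rows would be stacked into an over-determined system and solved in a least-squares sense (typically via SVD), usually inside a RANSAC loop to reject bad correspondences.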

Radar-Camera Fused Multi-Object Tracking: Online Calibration and Common Feature

Abstract

This paper presents a Multi-Object Tracking (MOT) framework that fuses radar and camera data to enhance tracking efficiency while minimizing manual intervention. Unlike many studies that underutilize radar by assigning it a supplementary role, despite its ability to provide accurate range/depth information about targets in a 3D world coordinate system, our approach gives radar a central role. This paper also uses common features to enable online calibration that autonomously associates detections from radar and camera. The main contributions of this work include: (1) the development of a radar-camera fusion MOT framework that exploits online radar-camera calibration to simplify the integration of detection results from these two sensors, (2) the use of common features between radar and camera data to accurately derive the real-world positions of detected objects, and (3) the adoption of feature matching and category-consistency checking to overcome the limitations of position-only matching and improve sensor association accuracy. To the best of our knowledge, we are the first to investigate the integration of radar-camera common features and their use in online calibration for MOT. The efficacy of our framework is demonstrated by its ability to streamline the radar-camera mapping process and improve tracking precision, as evidenced by real-world experiments in both controlled environments and actual traffic scenarios.

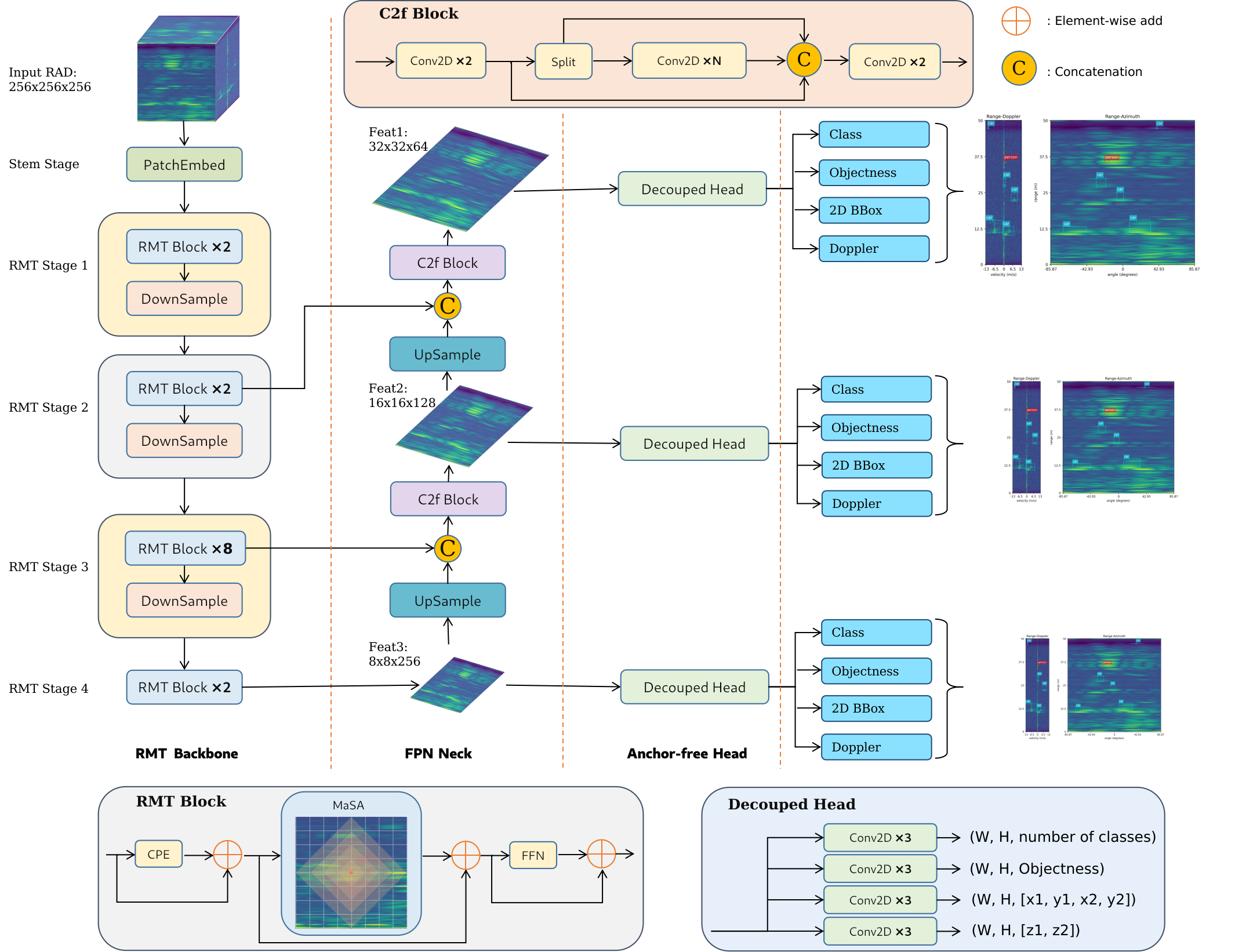
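The association idea in contribution (3), which combines position, appearance features, and a category-consistency check, can be sketched as a gated cost matrix. This is a minimal illustration under stated assumptions. Each detection is a hypothetical dict with `xy` (a world-plane position in meters, assumed to be already mapped through the online calibration), `category`, and `feature` (an embedding vector). The cost weighting and gate value are made up. The one-to-one matching is found by exhaustive search, which yields the same minimum-cost assignment as the Hungarian algorithm but is only practical for a handful of detections.

```python
import itertools
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def pair_cost(radar, camera, feature_weight=1.0):
    """Position distance plus appearance dissimilarity; infinite if categories disagree."""
    if radar["category"] != camera["category"]:
        return math.inf  # category-consistency check rejects the pair outright
    position_term = math.dist(radar["xy"], camera["xy"])
    feature_term = 1.0 - cosine_similarity(radar["feature"], camera["feature"])
    return position_term + feature_weight * feature_term


def associate(radar_dets, camera_dets, gate=5.0, feature_weight=1.0):
    """Return (radar_index, camera_index) pairs minimizing the total gated cost."""
    cost = [[pair_cost(r, c, feature_weight) for c in camera_dets] for r in radar_dets]
    n_r, n_c = len(radar_dets), len(camera_dets)
    if n_r <= n_c:
        candidates = ((range(n_r), p) for p in itertools.permutations(range(n_c), n_r))
    else:
        candidates = ((p, range(n_c)) for p in itertools.permutations(range(n_r), n_c))
    best_total, best_pairs = math.inf, []
    for rows, cols in candidates:
        pairs = list(zip(rows, cols))
        # Pairs above the gate are charged the gate value, i.e. treated as unmatched.
        total = sum(min(cost[r][c], gate) for r, c in pairs)
        if total < best_total:
            best_total = total
            best_pairs = [(r, c) for r, c in pairs if cost[r][c] < gate]
    return best_pairs


radar = [
    {"xy": (10.0, 2.0), "category": "car", "feature": [1.0, 0.0]},
    {"xy": (5.0, -1.0), "category": "pedestrian", "feature": [0.0, 1.0]},
]
camera = [
    {"xy": (5.2, -0.9), "category": "pedestrian", "feature": [0.1, 0.95]},
    {"xy": (10.3, 2.1), "category": "car", "feature": [0.9, 0.1]},
    {"xy": (10.1, 2.0), "category": "truck", "feature": [0.95, 0.05]},
]
print(associate(radar, camera))  # → [(0, 1), (1, 0)]
```

In this toy example, the camera "truck" detection is closer to the radar "car" than the camera "car" detection is, yet the category check keeps the two from being associated. Position-only matching would have paired them.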

Retentive Vision Transformer for Enhanced Radar Object Detection

Abstract

Despite significant advancements in environment perception capabilities for autonomous driving and intelligent robotics, cameras and LiDARs remain notoriously unreliable in low-light conditions and adverse weather, which limits their effectiveness. Radar serves as a reliable and low-cost sensor that can effectively complement these limitations. However, radar-based object detection has been underexplored due to the inherent weaknesses of radar data, such as low resolution, high noise, and lack of visual information. In this paper, we present TransRAD, a novel 3D radar object detection model designed to address these challenges by leveraging the Retentive Vision Transformer (RMT) to more effectively learn features from information-dense radar Range-Azimuth-Doppler (RAD) data. Our approach leverages the Retentive Manhattan Self-Attention (MaSA) mechanism provided by RMT to incorporate explicit spatial priors, thereby enabling more accurate alignment with the spatial saliency characteristics of radar targets in RAD data and achieving precise 3D radar detection across Range-Azimuth-Doppler dimensions. Furthermore, we propose Location-Aware NMS to effectively mitigate the common issue of duplicate bounding boxes in deep radar object detection.
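The Manhattan Self-Attention idea from RMT can be sketched on a tiny token grid. As described in the RMT paper, the attention map is multiplied element-wise by a spatial decay matrix D whose entries are γ raised to the Manhattan distance between token positions. The grid shape, the γ value, and the 1/√d score scaling below are assumptions chosen for illustration. Whether TransRAD applies the decay over two or three RAD axes is not specified here, so the helper accepts any grid shape. Pure Python keeps the example dependency-free; a real model would use batched tensor operations.

```python
import itertools
import math


def manhattan_decay_mask(grid_shape, gamma):
    """D[n][m] = gamma ** (Manhattan distance between token n and token m)."""
    coords = list(itertools.product(*(range(size) for size in grid_shape)))
    return [[gamma ** sum(abs(a - b) for a, b in zip(p, q)) for q in coords] for p in coords]


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def manhattan_self_attention(q, k, v, grid_shape, gamma=0.9):
    """(softmax(Q K^T / sqrt(d)) ⊙ D) V for tokens listed row-major on a grid."""
    d = len(q[0])
    decay = manhattan_decay_mask(grid_shape, gamma)
    out = []
    for i, qi in enumerate(q):
        attn = softmax([sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k])
        weights = [a * decay[i][j] for j, a in enumerate(attn)]  # explicit spatial prior
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))])
    return out
```

Because D decays with distance on the grid, a token attends most strongly to its spatial neighbors. This is the "explicit spatial prior" that the abstract refers to.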

Robust Multiobject Tracking Using mmWave Radar-Camera Sensor Fusion

Abstract

With the recent growth of the autonomous and automotive industries, sensor-fusion-based perception has garnered significant attention for multiobject classification and tracking applications. Building on our previous work on sensor-fusion-based multiobject classification, this letter presents a robust tracking framework based on high-level fusion of a monocular camera and a millimeter-wave radar. The proposed method aims to improve localization accuracy by leveraging the radar's depth resolution and the camera's cross-range resolution through decision-level sensor fusion. It also makes the system robust by continuously tracking objects despite single-sensor failures, using a tri-Kalman filter setup. The camera's intrinsic calibration parameters and the height of the sensor placement are used to estimate a bird's-eye view of the scene, which in turn aids in estimating the 2-D positions of the targets from the camera. The radar and camera measurements in a given frame are associated using the Hungarian algorithm. Finally, a tri-Kalman filter-based framework is used for tracking. The proposed approach achieves promising MOTA and MOTP scores, including significantly low missed-detection rates, which could support safe perception in both large-scale and small-scale autonomous or robotics applications.
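The tri-Kalman idea can be illustrated with a minimal constant-velocity Kalman filter along one axis; a 2-D tracker would run one filter per axis or use a 4-D state. The arrangement below uses three filters: a radar-only filter, a camera-only filter, and a fused filter that applies every available measurement. This is an assumed reading of the "tri-Kalman" setup, not the letter's exact design. The noise values, time step, outage window, and noise-free simulated measurements are all hypothetical. The sketch shows that when one sensor drops out, its filter keeps predicting while the fused filter keeps updating from the other sensor.

```python
class ConstantVelocityKF:
    """1-D constant-velocity Kalman filter with state [position, velocity]."""

    def __init__(self, pos, vel=0.0, pos_var=1.0, vel_var=1.0, q=1.0):
        self.x = [pos, vel]
        self.P = [[pos_var, 0.0], [0.0, vel_var]]
        self.q = q  # assumed white-noise acceleration variance

    def predict(self, dt):
        p, v = self.x
        self.x = [p + dt * v, v]
        (a, b), (c, d) = self.P
        q = self.q
        # P <- F P F^T + Q, with F = [[1, dt], [0, 1]] and a discrete white-noise-acceleration Q
        self.P = [[a + dt * (b + c) + dt * dt * d + q * dt**4 / 4, b + dt * d + q * dt**3 / 2],
                  [c + dt * d + q * dt**3 / 2, d + q * dt**2]]

    def update(self, z, r):
        """Fuse a position measurement z with variance r (H = [1, 0])."""
        (a, b), (c, d) = self.P
        s = a + r                    # innovation variance
        k0, k1 = a / s, c / s        # Kalman gain
        y = z - self.x[0]            # innovation
        self.x = [self.x[0] + k0 * y, self.x[1] + k1 * y]
        # P <- (I - K H) P
        self.P = [[(1 - k0) * a, (1 - k0) * b], [c - k1 * a, d - k1 * b]]


DT, TRUE_VEL = 0.1, 1.0
R_RADAR, R_CAMERA = 0.1, 0.3  # assumed measurement-noise variances (m^2)

radar_kf = ConstantVelocityKF(pos=0.0)
camera_kf = ConstantVelocityKF(pos=0.0)
fused_kf = ConstantVelocityKF(pos=0.0)

for step in range(1, 201):
    true_pos = TRUE_VEL * DT * step
    camera_ok = not (50 <= step < 80)  # simulated camera outage
    for kf in (radar_kf, camera_kf, fused_kf):
        kf.predict(DT)
    radar_kf.update(true_pos, R_RADAR)  # noise-free measurements for clarity
    fused_kf.update(true_pos, R_RADAR)
    if camera_ok:
        camera_kf.update(true_pos, R_CAMERA)
        fused_kf.update(true_pos, R_CAMERA)

print(round(fused_kf.x[0], 2), round(fused_kf.x[1], 2))
```

Because the fused filter receives a superset of the measurements, its position variance never exceeds that of either single-sensor filter.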

Deep Learning Based Robust Multi-Object Tracking via Fusion of mmWave Radar and Camera Sensors

Abstract

Autonomous driving holds great promise in addressing traffic safety concerns by leveraging artificial intelligence and sensor technology. Multi-Object Tracking plays a critical role in ensuring safer and more efficient navigation through complex traffic scenarios. This paper presents a novel deep learning-based method that integrates radar and camera data to enhance the accuracy and robustness of Multi-Object Tracking in autonomous driving systems. The proposed method leverages a Bi-directional Long Short-Term Memory network to incorporate long-term temporal information and improve motion prediction. An appearance feature model inspired by FaceNet is used to establish associations between objects across different frames, ensuring consistent tracking. A tri-output mechanism is employed, consisting of individual outputs for radar and camera sensors and a fusion output, to provide robustness against sensor failures and produce accurate tracking results.
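FaceNet-style appearance models are trained with a triplet loss. The loss pulls an anchor embedding toward a positive sample (the same object) and pushes it away from a negative sample (a different object) by at least a margin. A minimal sketch on L2-normalized embeddings follows. The margin value and function names are illustrative assumptions, and the paper's exact loss and network are not reproduced here.

```python
import math


def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def triplet_loss(anchor, positive, negative, margin=0.2):
    """max(||a - p||^2 - ||a - n||^2 + margin, 0) on unit-length embeddings."""
    a, p, n = (l2_normalize(e) for e in (anchor, positive, negative))
    return max(squared_distance(a, p) - squared_distance(a, n) + margin, 0.0)


# Easy triplet: the negative is already far away, so the loss is zero.
print(triplet_loss([1.0, 0.0], [0.9, 0.1], [0.0, 1.0]))  # → 0.0
# Hard triplet: positive and negative coincide, so the loss equals the margin.
print(triplet_loss([1.0, 0.0], [0.6, 0.8], [0.6, 0.8]))  # → 0.2
```

At tracking time, embeddings trained this way can be compared across frames (for example, by Euclidean distance) to decide which detections belong to the same track.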

A Flexible and Accurate Method for Target-based Extrinsic Calibration

Abstract

Advances in autonomous driving are inseparable from sensor fusion. Heterogeneous sensors are widely used for sensor fusion because of their complementary properties, with radar and camera being the most commonly equipped sensors. Intrinsic and extrinsic calibration are essential steps in sensor fusion. Extrinsic calibration, which is independent of each sensor's own parameters and is performed after the sensors are installed, largely determines the accuracy of sensor fusion. Many target-based methods require cumbersome operating procedures and well-designed experimental conditions, which makes them difficult to carry out. To this end, we propose a flexible, easy-to-reproduce, and accurate method for the extrinsic calibration of a 3D radar and a camera. The proposed method does not require a specially designed calibration environment. Instead, a single corner reflector (CR) is placed on the ground, and radar and camera data are collected simultaneously and iteratively using the Robot Operating System (ROS). Radar-camera point correspondences are obtained from the data timestamps and then used as input to solve the perspective-n-point (PnP) problem, which yields the extrinsic calibration matrix. RANSAC is used for robustness, and the Levenberg-Marquardt (LM) nonlinear optimization algorithm is used for accuracy.
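The correspondence step, which pairs each radar detection of the corner reflector with the camera frame closest in time, can be sketched as a nearest-timestamp search. This sketch assumes that each message carries a single CR detection and that both streams are sorted by time. The 50 ms tolerance is an arbitrary example value. In ROS itself, `message_filters.ApproximateTimeSynchronizer` implements a related approximate-time policy.

```python
import bisect


def pair_by_timestamp(radar_msgs, camera_msgs, max_dt=0.05):
    """Pair each radar sample with the camera sample nearest in time.

    Both inputs are lists of (timestamp_seconds, measurement) sorted by timestamp.
    Pairs whose time offset exceeds max_dt are discarded.
    """
    cam_times = [t for t, _ in camera_msgs]
    pairs = []
    for t_radar, radar_point in radar_msgs:
        i = bisect.bisect_left(cam_times, t_radar)
        # The nearest camera stamp is either just before or just after t_radar.
        neighbors = [j for j in (i - 1, i) if 0 <= j < len(cam_times)]
        if not neighbors:
            continue
        j = min(neighbors, key=lambda n: abs(cam_times[n] - t_radar))
        if abs(cam_times[j] - t_radar) <= max_dt:
            pairs.append((radar_point, camera_msgs[j][1]))
    return pairs


radar = [(0.00, (5.0, 0.0, 0.0)), (1.00, (6.0, 1.0, 0.0)), (2.00, (7.0, 2.0, 0.0))]
camera = [(0.01, (320, 240)), (1.20, (400, 250)), (1.98, (450, 260))]
print(pair_by_timestamp(radar, camera))
# → [((5.0, 0.0, 0.0), (320, 240)), ((7.0, 2.0, 0.0), (450, 260))]
```

The radar sample at t = 1.00 s is dropped because its nearest camera frame is 0.2 s away. The resulting 3D-2D pairs are the kind of input a PnP solver expects.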

Online Targetless Radar Camera Extrinsic Calibration Based on the Common Features of Radar and Camera

Abstract

Sensor fusion is essential for autonomous driving and autonomous robots, and radar-camera fusion systems have gained popularity due to their complementary sensing capabilities. However, accurate calibration between these two sensors is crucial to ensure effective fusion and improve overall system performance. Calibration involves intrinsic and extrinsic calibration, with the latter being particularly important for achieving accurate sensor fusion. Unfortunately, many target-based calibration methods require complex operating procedures and well-designed experimental conditions, posing challenges for researchers attempting to reproduce the results. To address this issue, we introduce a novel approach that leverages deep learning to extract a common feature from raw radar data (i.e., Range-Doppler-Angle data) and camera images. Instead of explicitly representing these common features, our method implicitly uses them to match identical objects across both data sources. Specifically, the extracted common feature serves as an example to demonstrate an online targetless calibration method between the radar and camera systems, and the extrinsic transformation matrix is estimated through this feature-based approach. To enhance the accuracy and robustness of the calibration, we apply RANSAC and the Levenberg-Marquardt (LM) nonlinear optimization algorithm to derive the matrix.
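Both RANSAC and LM refinement revolve around the reprojection error. RANSAC scores each hypothesized extrinsic [R | t] by counting how many radar points project to within a pixel threshold of their matched image points, and LM then minimizes the squared errors over those inliers. The sketch below shows only that scoring step. It assumes that radar detections are available as 3-D points in the radar frame and that the camera intrinsic matrix K is known. The minimal pose solver and the optimizer are omitted; OpenCV's `cv2.solvePnPRansac` and `cv2.solvePnPRefineLM` are one off-the-shelf option. The function names and threshold are illustrative.

```python
import math


def project(K, R, t, point):
    """Pinhole projection of a radar-frame 3-D point: x ~ K (R X + t)."""
    xc = [sum(R[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)]
    if xc[2] <= 0:
        raise ValueError("point lies behind the camera")
    u = (K[0][0] * xc[0] + K[0][1] * xc[1]) / xc[2] + K[0][2]
    v = K[1][1] * xc[1] / xc[2] + K[1][2]
    return u, v


def score_hypothesis(K, R, t, points_3d, pixels, threshold_px=3.0):
    """RANSAC-style scoring: inlier indices and inlier RMS reprojection error (pixels)."""
    errors = [math.dist(project(K, R, t, P), p) for P, p in zip(points_3d, pixels)]
    inliers = [i for i, e in enumerate(errors) if e < threshold_px]
    rms = math.sqrt(sum(errors[i] ** 2 for i in inliers) / len(inliers)) if inliers else math.inf
    return inliers, rms


K = [[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]]
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = [0.0, 0.0, 0.0]
points = [(0.0, 0.0, 10.0), (1.0, 0.0, 10.0), (0.0, 1.0, 5.0)]
pixels = [(320.0, 240.0), (401.0, 240.0), (320.0, 300.0)]  # last match is an outlier
print(score_hypothesis(K, R, t, points, pixels))  # → ([0, 1], 0.7071067811865476)
```

Among all candidate hypotheses, the one with the most inliers would be kept, and its inlier set would then be passed to the nonlinear refinement.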

Want a quick tour? I’m happy to share short Loom demos or live notebooks.

🧰 Featured GitHub repos

All software releases of the above projects can also be found here!

🖼️ Gallery

A casual gallery of travel and everyday moments I wanted to keep.

Make time for the unmeasured—quiet, beauty, and the next bend in the road.